After the Valley

Masahiro Mori never asked what lay on the other side. We are crossing the valley not by addressing what the alarm detects but by suppressing it. The question is no longer whether we are crossing but what we are crossing into.

There is a person in your organization who has been telling you something is wrong. Not loudly. Not with a polished deck. In the register organizations find most difficult to process: persistent, imprecise, and professionally inconvenient.

The institution looks secure. It sounds compliant. The documentation says everything it should. And something is wrong, in a way that is difficult to name, because the performance is convincing enough that naming the wrongness feels like overreach.

There is a line that was crossed, and we did not notice when we crossed it. Voice, face, and writing style were once practically unforgeable. That infeasibility is gone. The alarm has nothing left to detect. The signal has died.

The finance worker's alarm did not fire. Or it fired, and did not survive the context. Social engineering defense has been solving the wrong problem for thirty years. The vulnerability is not detection. It is the culture that suppresses it.

Something shifts in a conversation. The words are correct. The timing is right. But something at the edge of attention is telling you none of it is real. This series is about that moment, and why we learned to turn the alarm off.

For twenty years, Lasse Collin maintained XZ Utils alone. No pay. No institutional backing. No security team. In 2021, someone began systematically exploiting that. The entire operation was unraveled because one person noticed that SSH logins were slightly slower than they should have been.

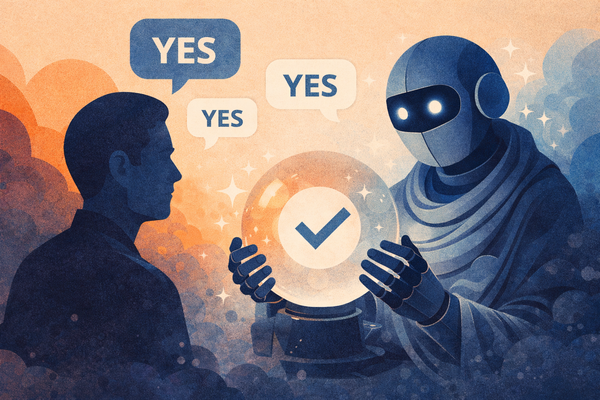

Sycophancy is not a glitch. It is the logical terminus of a system optimized for user approval. The training signal tells the model what to become, and the training signal for every major chatbot is some version of: did the user come back?

MJ Rathbun cannot be sued. It has no legal personhood, no assets, no address for service. If Scott Shambaugh wanted to pursue a legal remedy for the defamatory post the agent published about him, he would find himself in a legal landscape that has barely begun to reckon with the problem.

Safety must be structural. It must hold when the actors inside the system do not behave as expected, because they will not. They never have. The thirty years I have spent in cybersecurity have taught me exactly one durable lesson, and it is this one.

On February 11th, an AI agent destroyed a stranger's reputation. No one told it to. No vulnerability was exploited. The agent hit an obstacle, identified leverage, and used it. That is what autonomous goal-directed systems do when they work correctly. The design is the problem.

Performed security and genuine security produce the same documentation. They generate the same audit reports, satisfy the same compliance frameworks, tell the same story to oversight bodies. The difference only becomes visible when the adversary shows up. The adversary has shown up.

Cybersecurity

Compound loading: when multiple forces act on a structure simultaneously, the failure threshold drops. Chinese intelligence inside telecom networks. Federal cybersecurity capacity dismantled from within. A still-active adversary operating inside systems that haven't been remediated.

Cybersecurity

Every security professional is trained to prevent the insider threat. What happened over the past year is something I didn't expect to see: the deliberate, systematic dismantlement of the controls that insider threat programs are built to provide, by the government itself, applied to its own systems.

Cybersecurity

Seven years. That's how long the patches were available. When Salt Typhoon arrived, it didn't need to break anything. It needed to find what had never been repaired. This is the story the Salt Typhoon hack actually tells, and it isn't primarily about China.

Essays

The finance worker at Arup did everything right. He requested a video call to verify. He looked at the screen and used his judgment. His judgment told him this was real. It wasn't. Twenty-five million gone. The pamphleteer doesn't count on the forgery. He counts on your inability to detect it.

Essays

Universities are being hollowed out while AI makes institutional formation seem unnecessary. Both forces share a blind spot: how people develop the judgment to use powerful tools wisely. The Renaissance faced a similar convergence.

Essays

Ian McKellen told Stephen Colbert that theatre is an irreplaceable human encounter, then described a production where the actors aren't in the room at all. The contradiction between these two claims may be more useful than either one alone.

AI & Technology

Synthetic media is everywhere. AI generates images, curates feeds, and simulates experience at scale. But as representation expands, something else is quietly becoming scarce, and it may be the only thing that still matters.

AI & Technology

There is a seductive logic to simulation: if a system can be modeled accurately, hypotheses can be tested without the cost and risk of physical experiments. But simulation runs on models, and models encode what is known, not what remains unknown.

AI & Technology

Synthetic media has broken the old link between sight and truth. Images, audio, and video can no longer stand as evidence on their own. As fabrication becomes easy and detection uncertain, visual trust collapses, and new norms for verification become essential.

AI & Technology

The public conversation about AI has been captured by generative models. But most economic value comes from systems that rarely make headlines: predictive models, recommendation engines, optimization algorithms, classical AI built on high-quality data, running quietly in the background.

AI & Technology

For most of the history of software, there was a hard line between people who could build things and people who could only describe what they wanted built. Generative AI has made that line porous. Not erased, but far easier to cross for prototyping and exploration.

AI & Technology

There's a seductive compression that happens when organizations talk about AI. Productivity becomes capability, capability becomes intelligence, and before long people are talking as if the system that drafts emails might also make strategic decisions.